Optimization and Solving Equations in Matlab

Whether you are new to Matlab or a seasoned user, knowing which optimization and equation-solving tools to reach for can save a lot of time. This post walks through the main options: the basics of optimization, common optimization algorithms, linear programming, methods for solving nonlinear equations, and the Optimization Toolbox. By the end, you should have a clear picture of how to set up and solve these problems in Matlab.

Introduction to Optimization in Matlab

Optimization means finding the best solution to a problem from a set of possible solutions, usually by minimizing or maximizing an objective function. Matlab offers a broad set of tools for this, and they are widely used on complex engineering and scientific problems. This section covers the basics and how they apply to real-world problems.
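As a small illustration of minimizing and maximizing an objective function, here is a sketch that uses fminsearch, a derivative-free solver that ships with base Matlab. The two objective functions and the starting points are made up for this example.

```matlab
% Objective: a bowl-shaped function with its minimum at (1, -2).
objective = @(x) (x(1) - 1)^2 + (x(2) + 2)^2;

x0 = [0, 0];                               % initial guess (illustrative)
[xMin, fMin] = fminsearch(objective, x0);  % derivative-free simplex search

% Matlab solvers minimize. To maximize g(x), minimize -g(x) instead.
g = @(x) -(x - 3)^2 + 5;                   % peak value 5 at x = 3
[xMax, negG] = fminsearch(@(x) -g(x), 0);
gMax = -negG;                              % undo the sign flip
```

fminsearch only needs function values, which makes it a convenient first tool, but it is usually slower than gradient-based solvers on smooth problems.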

One of the key features of optimization in Matlab is the ability to handle both linear and nonlinear optimization problems. This makes it a versatile tool for a wide range of applications, from designing engineering systems to financial modeling. By using optimization algorithms built into Matlab, engineers and scientists can efficiently find the best solution to their problems.

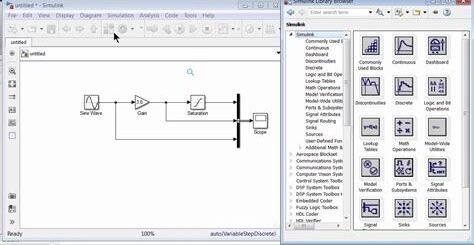

Furthermore, the Optimization Toolbox, a separately licensed add-on to Matlab, includes a variety of specialized algorithms for different types of optimization problems. Each solver targets a particular combination of objective and constraints, which makes it easier to find the optimal solution to complex problems. Whether it’s linear programming, nonlinear equation solving, or other optimization tasks, the Optimization Toolbox has a solver designed for the job.

Overall, the introduction to optimization in Matlab lays the groundwork for understanding its capabilities and applications. From basic optimization algorithms to the specialized optimization toolbox, Matlab provides the resources needed to solve a wide range of real-world problems in engineering, science, and beyond.

Common Optimization Algorithms in Matlab

When working with optimization problems in Matlab, it’s important to understand the common optimization algorithms that are frequently used. These algorithms are essential for finding the best solution to a given problem, whether it’s maximizing profits, minimizing costs, or achieving the best possible outcome in any other scenario.

One of the best-known optimization algorithms is the gradient descent method. It minimizes a function by iteratively moving in the direction of steepest descent, that is, along the negative gradient. It’s a popular choice for unconstrained optimization problems and is easy to implement in Matlab in a few lines, although built-in solvers such as fminunc rely on more sophisticated quasi-Newton and trust-region methods.
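A minimal sketch of the steepest-descent iteration is shown here. The test function, the fixed step size alpha, and the tolerances are assumptions chosen for illustration; in practice the step size has to be tuned or replaced by a line search.

```matlab
% Minimize f(x) = (x1 - 1)^2 + 2*(x2 + 3)^2 with fixed-step gradient descent.
f     = @(x) (x(1) - 1)^2 + 2*(x(2) + 3)^2;
gradF = @(x) [2*(x(1) - 1); 4*(x(2) + 3)];   % analytic gradient of f

x       = [0; 0];     % initial guess
alpha   = 0.1;        % step size (assumed; problem dependent)
tol     = 1e-8;       % stop once the gradient norm falls below this
maxIter = 1000;       % safety cap on the number of iterations

for k = 1:maxIter
    g = gradF(x);
    if norm(g) < tol
        break         % gradient is (nearly) zero: stationary point reached
    end
    x = x - alpha * g;   % move against the gradient (steepest descent)
end
```

For this quadratic the iterates approach the minimizer (1, -3). A step size that is too large makes the iteration diverge, which is the main practical weakness of the fixed-step variant.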

Another widely used algorithm is Newton’s method. In its root-finding form it uses the first derivative to solve a nonlinear equation. Applied to optimization, it uses the first and second derivatives of the objective (the gradient and the Hessian) to find a point where the gradient vanishes, and it typically converges quickly once it is close to the solution. Several Matlab solvers use Newton-type or quasi-Newton steps internally, so you rarely need to code the method by hand.
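To make the role of the first and second derivatives concrete, here is a hand-written sketch of Newton’s method for a one-variable minimization. The function f(x) = exp(x) - 2x is an assumed example whose minimizer is log(2); the derivative handles are written out by hand.

```matlab
% Minimize f(x) = exp(x) - 2*x by applying Newton's method to f'(x) = 0.
df  = @(x) exp(x) - 2;   % first derivative
d2f = @(x) exp(x);       % second derivative (always positive here)

x = 0;                   % initial guess
for k = 1:50
    step = df(x) / d2f(x);   % Newton step: f'(x) / f''(x)
    x = x - step;
    if abs(step) < 1e-10
        break                % successive iterates have stopped changing
    end
end
```

Because the second derivative is positive everywhere, the stationary point found this way is a minimum. For functions where that is not guaranteed, a plain Newton iteration can just as easily converge to a maximum or a saddle point.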

Genetic algorithms are also frequently employed in Matlab, where they are available through the ga function in the Global Optimization Toolbox. These algorithms are inspired by the process of natural selection: a population of candidate solutions is evolved through selection, crossover, and mutation. They suit complex, multi-variable problems that are non-smooth or have many local minima, and they often find high-quality solutions, though without a guarantee of global optimality.
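A short sketch of a genetic algorithm run is given here. It assumes the Global Optimization Toolbox is installed, since that is where the ga function lives; the fitness function and bounds are invented for the example.

```matlab
% Requires the Global Optimization Toolbox (assumption for this sketch).
rng(0);                                        % fix the seed for repeatable runs

fitness = @(x) (x(1) - 1)^2 + (x(2) + 2)^2;    % ga passes x as a row vector
nvars   = 2;                                   % number of decision variables
lb      = [-5, -5];                            % lower bounds
ub      = [ 5,  5];                            % upper bounds

opts = optimoptions('ga', 'Display', 'off');
[xBest, fBest] = ga(fitness, nvars, [], [], [], [], lb, ub, [], opts);
```

Since the search is stochastic, the result is only approximately (1, -2) and changes with the seed. A common pattern is to polish the ga result with a local solver.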

Implementing Linear Programming in Matlab

Linear programming (LP) is a method used to achieve the best outcome (such as maximum profit or lowest cost) in a mathematical model whose requirements are represented by linear relationships. In Matlab, implementing linear programming means using solver functions to handle problems with a linear objective function, linear inequality and equality constraints, and bounds on the variables.

The central tool for linear programming in Matlab is the linprog function, which is part of the Optimization Toolbox. It solves LP problems from the objective function coefficients, the inequality and equality constraints, and the bounds on the decision variables.

It is also important to understand the form that linprog expects. The solver minimizes f'*x subject to A*x <= b, Aeq*x = beq, and lb <= x <= ub. Formulating a problem therefore means choosing the decision variables, collecting the objective coefficients in the vector f, and writing each constraint as a row of A or Aeq. To maximize an objective, negate f and minimize.
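The following sketch shows how a small LP might be passed to linprog. The problem is a textbook-style example chosen for illustration: maximize 3x + 5y subject to x <= 4, 2y <= 12, 3x + 2y <= 18, and x, y >= 0. It assumes the Optimization Toolbox is available.

```matlab
% linprog minimizes, so negate the coefficients to maximize 3x + 5y.
f = [-3; -5];

% Inequality constraints in the form A*x <= b.
A = [1 0;      %  x        <= 4
     0 2;      %       2y  <= 12
     3 2];     % 3x +  2y  <= 18
b = [4; 12; 18];

lb = [0; 0];   % x >= 0, y >= 0 (no upper bounds)

opts = optimoptions('linprog', 'Display', 'off');
[x, fval] = linprog(f, A, b, [], [], lb, [], opts);

maxValue = -fval;   % undo the sign flip to recover the maximized objective
```

The empty arguments stand for the equality constraints Aeq and beq and the upper bounds, none of which this problem has. The optimum is at x = 2, y = 6 with an objective value of 36.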

Furthermore, Matlab provides a range of examples and documentation to support users in implementing linear programming. These resources include sample problems, step-by-step guides, and theoretical explanations that can help beginners and experienced users alike in understanding and applying LP techniques in the Matlab environment.

Nonlinear Equation Solving Methods in Matlab

There are several methods for finding the roots of nonlinear equations in Matlab. One of the most commonly used is the Newton-Raphson method, an iterative technique that starts from an initial guess x0 and repeatedly replaces the current estimate x_k with x_k - f(x_k)/f'(x_k), the root of the tangent line at x_k. The iteration continues until the change between successive estimates, or the residual f(x_k), falls below the desired tolerance.
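A hand-written sketch of the Newton-Raphson iteration is shown here. The cubic x^3 - 2x - 5, the starting guess, and the tolerance are assumptions for this example; the derivative is supplied analytically.

```matlab
% Find a root of f(x) = x^3 - 2*x - 5 with the Newton-Raphson iteration.
f  = @(x) x^3 - 2*x - 5;
df = @(x) 3*x^2 - 2;     % analytic derivative of f

x       = 2;             % initial guess
tol     = 1e-12;         % tolerance on the size of the update
maxIter = 50;

for k = 1:maxIter
    dfx = df(x);
    if dfx == 0
        error('Zero derivative: Newton-Raphson step is undefined.');
    end
    dx = f(x) / dfx;     % tangent-line correction
    x  = x - dx;
    if abs(dx) < tol
        break            % update is negligible: accept x as the root
    end
end
```

The method converges quickly from a good starting point but can fail if the guess is far from the root or the derivative is close to zero, so a cap on the number of iterations is a sensible safeguard.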

Another popular method is Broyden’s method, which generalizes the secant method to systems of nonlinear equations. Rather than computing the Jacobian matrix at every iteration, it maintains an approximation of the Jacobian and corrects it with a cheap rank-one update after each step. This makes it particularly useful when the Jacobian is expensive or unavailable.
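Here is a compact sketch of Broyden’s method in its commonly used "good" form, which keeps an approximate Jacobian B and corrects it with a rank-one update after each step. The two-equation system, the starting point, and the use of a forward-difference Jacobian for the initial B are assumptions made for this example.

```matlab
% Solve F(x) = 0 for the system x1^2 + x2^2 = 4, x1 = x2.
F = @(x) [x(1)^2 + x(2)^2 - 4; x(1) - x(2)];

x  = [1; 2];             % initial guess
Fx = F(x);
n  = numel(x);

% Initial Jacobian approximation by forward differences (computed once).
h = 1e-6;
B = zeros(n);
for j = 1:n
    e = zeros(n, 1);
    e(j) = h;
    B(:, j) = (F(x + e) - Fx) / h;
end

tol     = 1e-10;
maxIter = 50;
for k = 1:maxIter
    dx   = -(B \ Fx);    % quasi-Newton step using the approximate Jacobian
    x    = x + dx;
    Fnew = F(x);
    if norm(Fnew) < tol
        Fx = Fnew;
        break
    end
    dF = Fnew - Fx;
    % Rank-one secant update: the new B satisfies B*dx = dF.
    B  = B + ((dF - B*dx) * dx') / (dx' * dx);
    Fx = Fnew;
end
```

After the initial finite-difference estimate, each iteration needs only one new evaluation of F, which is the main appeal compared with recomputing the full Jacobian.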

In addition to these methods, Matlab provides ready-made solvers. For a single equation in one variable, fzero is included in base Matlab. For systems of nonlinear equations, the Optimization Toolbox provides fsolve, which by default uses a trust-region-dogleg algorithm and can also be switched to a Levenberg-Marquardt algorithm.
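A brief sketch of an fsolve call follows, assuming the Optimization Toolbox is installed. The two-equation system and the starting point are illustrative choices.

```matlab
% Solve the system
%   2*x1 - x2 = exp(-x1)
%  -x1 + 2*x2 = exp(-x2)
% written as F(x) = 0.
F = @(x) [ 2*x(1) - x(2) - exp(-x(1));
          -x(1) + 2*x(2) - exp(-x(2))];

x0   = [-5; -5];                                 % initial guess
opts = optimoptions('fsolve', 'Display', 'off'); % default algorithm is kept

[xSol, Fval, exitflag] = fsolve(F, x0, opts);
% exitflag > 0 indicates that fsolve reports convergence.
```

Checking exitflag and the residual Fval is worthwhile, because fsolve can stop at a point that is not a root if the starting guess is poor.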

Overall, Matlab provides a wide range of tools and methods for solving nonlinear equations, making it a powerful tool for engineers and scientists working in fields such as control systems, robotics, and optimization.

Optimization Toolbox in Matlab

The Optimization Toolbox in MATLAB provides a comprehensive set of algorithms for solving optimization problems. These algorithms cover a wide range of problem types, including linear programming, quadratic programming, mixed-integer linear programming, and nonlinear optimization. The toolbox handles both constrained and unconstrained problems, and it also solves systems of nonlinear equations and least-squares problems.

One of the key features of the Optimization Toolbox is its ability to handle large-scale optimization problems efficiently. This is critical for many real-world applications where the number of variables and constraints can be substantial. The toolbox employs state-of-the-art optimization techniques, such as interior-point methods and active-set methods, to provide efficient and reliable solutions.
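As a sketch of how an algorithm is selected, the example here solves one small constrained problem with fmincon twice, once with the interior-point algorithm and once with the active-set algorithm. The objective, constraint, and starting point are assumptions for illustration, and the Optimization Toolbox is required.

```matlab
% Minimize (x1 - 1)^2 + (x2 - 2)^2 subject to x1 + x2 <= 2 and x >= 0.
objective = @(x) (x(1) - 1)^2 + (x(2) - 2)^2;
A  = [1, 1];          % linear inequality A*x' <= b
b  = 2;
lb = [0, 0];          % lower bounds
x0 = [0, 0];          % feasible starting point

algorithms = {'interior-point', 'active-set'};
solutions  = zeros(numel(algorithms), 2);

for i = 1:numel(algorithms)
    opts = optimoptions('fmincon', ...
                        'Algorithm', algorithms{i}, ...
                        'Display', 'off');
    solutions(i, :) = fmincon(objective, x0, A, b, [], [], lb, [], [], opts);
end
```

Both runs should agree on the constrained minimizer (0.5, 1.5) to within solver tolerance. On large, sparse problems the choice of algorithm matters much more than it does on a toy problem like this one.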

In addition to its core capabilities, the Optimization Toolbox includes specialized algorithms for specific problem types, such as mixed-integer linear programming through the intlinprog function. Global optimization methods, including genetic algorithms, are provided separately in the Global Optimization Toolbox. These specialized solvers are designed for the particular challenges of their problem types and aim to deliver high-quality solutions in a reasonable amount of time.
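A small mixed-integer linear program can be sketched with intlinprog as follows. The problem is invented for illustration: maximize 5x + 4y subject to 6x + 4y <= 24, x + 2y <= 6, with x and y non-negative integers. The Optimization Toolbox is assumed to be installed.

```matlab
% intlinprog minimizes, so negate the coefficients to maximize 5x + 4y.
f      = [-5; -4];
intcon = [1, 2];            % both variables must take integer values

A  = [6 4;                  % 6x + 4y <= 24
      1 2];                 %  x + 2y <= 6
b  = [24; 6];
lb = [0; 0];                % x >= 0, y >= 0

opts = optimoptions('intlinprog', 'Display', 'off');
[x, fval] = intlinprog(f, intcon, A, b, [], [], lb, [], opts);

x        = round(x);        % remove tiny floating-point deviations from integers
maxValue = -fval;           % undo the sign flip
```

Without the integer requirement, the best point is (3, 1.5) with value 21. With it, the best point is (4, 0) with value 20, which shows why rounding an LP solution is not a substitute for a mixed-integer solver.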

Overall, the Optimization Toolbox in MATLAB is a powerful and versatile tool for solving a wide range of optimization problems. Whether you are a researcher, engineer, or data scientist, the toolbox provides the algorithms and features you need to tackle complex optimization problems effectively and efficiently.